Outsourcing Humanity, One Click at a Time

From birthday texts to battlefield targets, the human part is what disappears first.

This issue of Social Signals was written to my go-to Get to Work playlist.

When you buy a refrigerator, GE does not really control what you put inside. That is more or less your business. Just don’t do anything illegal with their product, right?

That is not exactly how AI works, but it is close to the argument companies like OpenAI are making about military use. It is also the same argument Blue AI could make about how I used an agent to automate parts of my life.

“We just make the tool. We do not decide what people do with it.”

This week that line is getting a lot harder to accept. Unlike a fridge, AI can be updated, guided, restricted, and shaped. That means the question is not just what the tool can do, but who is still responsible when the human parts start disappearing.

And when the people using the products get to shift the definition of “legal”? Well, then we have a whole other issue. I wrote about that this week. -Greg

The Human Loop is the Product

This signal is not just about military contracts. It is about how fast AI can remove humans from judgment loops, first in small personal ways, then in massive civic and military ones.

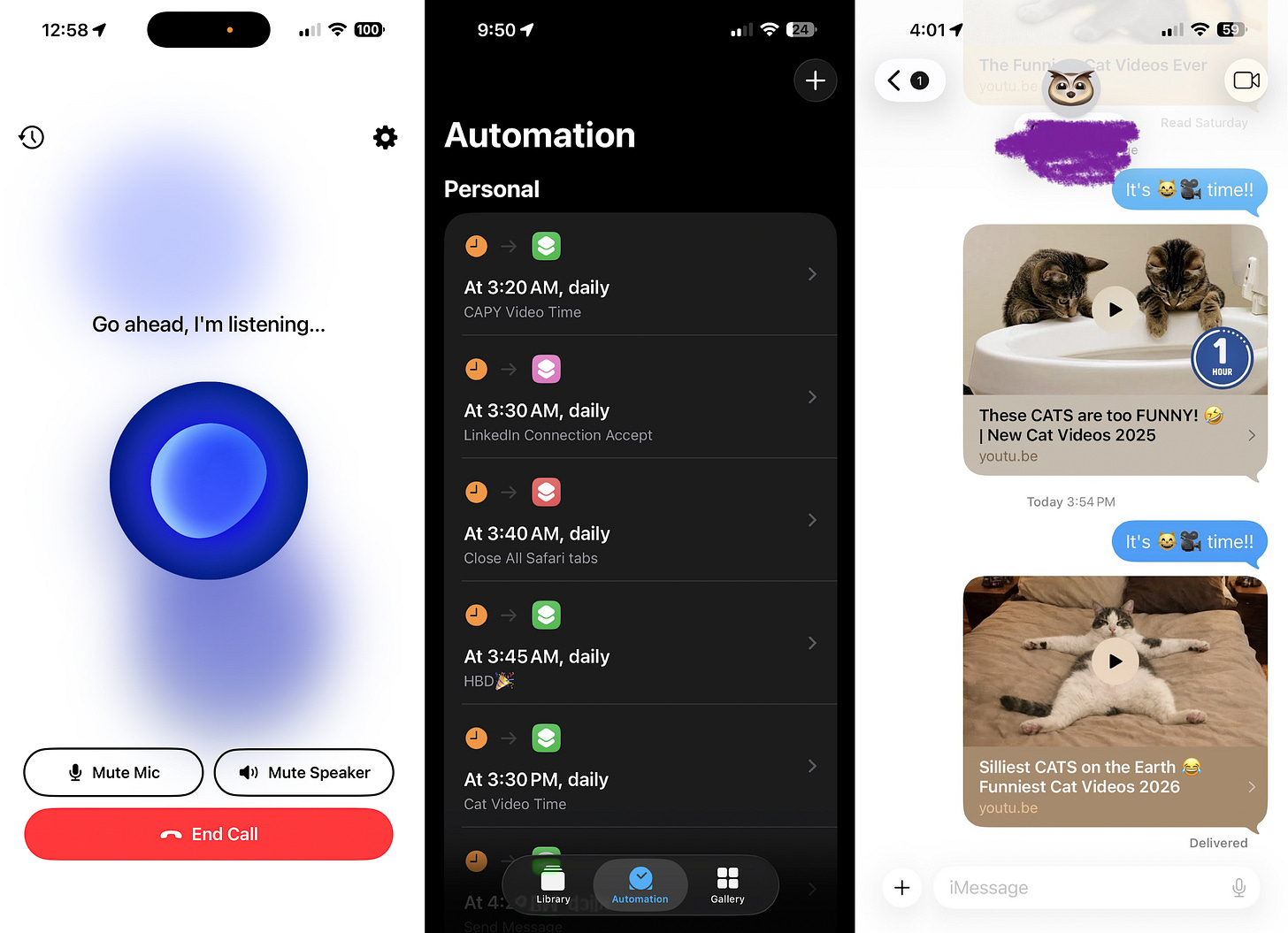

As I wrote about last week, I’ve been experimenting with an AI agent that can literally operate my phone like a thumb. It can wish people happy birthday, accept LinkedIn requests, clean up screenshots, close tabs, and send messages. And what I learned very quickly is that the easiest things to automate are often the exact things that carry human meaning.

It spammed a friend with a pre-roll ad instead of a capybara video, accepted LinkedIn connections I regretted, messaged long-lost contacts, and, worst of all, posted a birthday greeting to a deceased friend’s still-active Facebook page.

My point is not that automation is bad. My point is that some tasks are actually little containers for attention, judgment, memory, and care. Once you hand those over, efficiency goes up and humanity goes down.

If an agent can handle the mechanics of being a good friend, a good parent, or a good citizen, we need to ask what part of that job was the actual human part all along.

The tension of keeping the human stuff human in my experiment has huge parallels with what’s happening with Iran today. And what I learned is that if we keep outsourcing the human parts, we risk losing them entirely.

Meanwhile, in Iran…

Anthropic publicly said it supports U.S. national security work and already deploys Claude in classified and defense settings, but drew two hard lines it would not remove from contracts with the Department of War: no mass domestic surveillance and no fully autonomous weapons.

Anthropic said the Pentagon demanded “any lawful use,” threatened to remove Anthropic from government systems, threatened to label it a “supply chain risk,” and even floated the Defense Production Act if Anthropic wouldn’t drop those safeguards. Anthropic refused.

THEN… U.S. agencies including State, Treasury, and HHS were directed to stop using Anthropic products, with State shifting its in-house chatbot to OpenAI.

Refusing the administration’s preferred terms didn’t just cost Anthropic one deal; it triggered a wider federal punishment campaign. This is a big signal, you guys.

OpenAI then announced a Pentagon agreement for classified deployments and said it shared similar red lines: no mass domestic surveillance, no directing autonomous weapons systems, and no high-stakes automated decisions without a human decision-maker.

OpenAI also argued its setup had “more guardrails” because it kept a safety stack under its control, used cloud deployment, and kept cleared OpenAI personnel in the loop.

BUT… even in OpenAI’s own published agreement language, the Pentagon could use the system “for all lawful purposes,” and critics focused on the ambiguity inside words like lawful, oversight, and human control.

Casey Newton’s argument is basically that Anthropic asked for firmer contractual red lines, while OpenAI initially accepted softer language plus technical controls and interpretation. That matters because “lawful” can stretch very far in surveillance and military contexts.

AND THEN… After backlash, Sam Altman said OpenAI rushed the announcement and OpenAI amended the deal. The updated post now says the system “shall not be intentionally used for domestic surveillance of U.S. persons and nationals,” says commercially acquired personal data can’t be used that way, and says intelligence agencies like the NSA would need a new agreement to use its services.

Altman said the company was revising the deal to clarify those principles. So the current state is: OpenAI has moved closer to Anthropic’s language after public pressure, but the trust gap remains because the original agreement looked broader than the public messaging.

Signals from consumers

Consumers paying attention are pissed. They are treating this as a values moment, not just a product moment. That’s a big signal for tech companies.

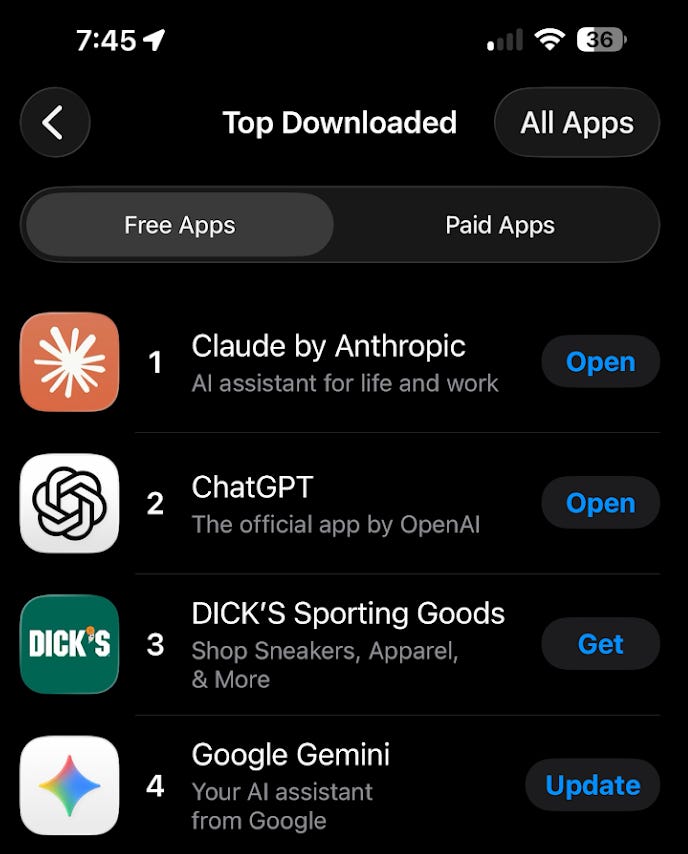

Millions of people are switching from ChatGPT to Claude this week.

Claude climbed to No. 1 in Apple’s U.S. App Store over the weekend.

ChatGPT uninstalls spiked 295 percent on February 28.

My entire Threads feed is full of tutorials on how to export your ChatGPT customizations into Claude.

Anthropic rolled out tools that make switching easier, including free memory and an import tool that helps users bring context from other chatbots into Claude.

People aren’t only leaving ChatGPT. Anthropic is helping them migrate. And yeah, I’ve been learning and using Claude more because of all of this.

Signal for other companies

This is a flashing warning light for any tech company that thinks it can set ethical boundaries and still stay in the administration’s good graces.

The Anthropic episode suggests that if a company resists politically sensitive uses, it could face pressure well beyond one contract, including agency-wide bans, reputational attacks, and efforts to frame it as risky for government use.

The broader business signal is chilling: play ball or risk exclusion. That matters for AI labs, cloud vendors, cybersecurity firms, and any company whose product touches public-sector accounts.

This is certainly not the first sign of this wave. From DEI last year to conveniently not condemning ICE in Minnesota, companies are being trained (and seemingly rewarded) to stay quiet on issues that affect real human lives.

Are the AI companies responsible for how you use AI? They say no…

In an internal all-hands meeting Tuesday, Sam Altman told OpenAI staff that while the company can build a safety stack and advise on where its models fit, it doesn’t get to make the military’s operational decisions. He said that once these systems are inside the machine, OpenAI may influence the guardrails, but not the actual missions.

“So maybe you think the Iran strike was good and the Venezuela invasion was bad. You don’t get to weigh in on that.” - Sam Altman

So… ummm… “human in the loop” starts to sound a lot softer when the company itself is saying it can’t ultimately decide how the government uses the technology.

That pushes this beyond a contract fight into a bigger question about accountability: if AI is helping power surveillance, targeting, or military action, who’s responsible when the humans in charge stop listening to the humans who built it? This is scary stuff, you guys.

The two connected stories

My agentified phone experiment is the consumer-scale version of the defense story.

In my life, the human disappeared from birthdays, relationships, and judgment.

In the Pentagon story, the fight is over whether the human disappears from surveillance, targeting, and force.

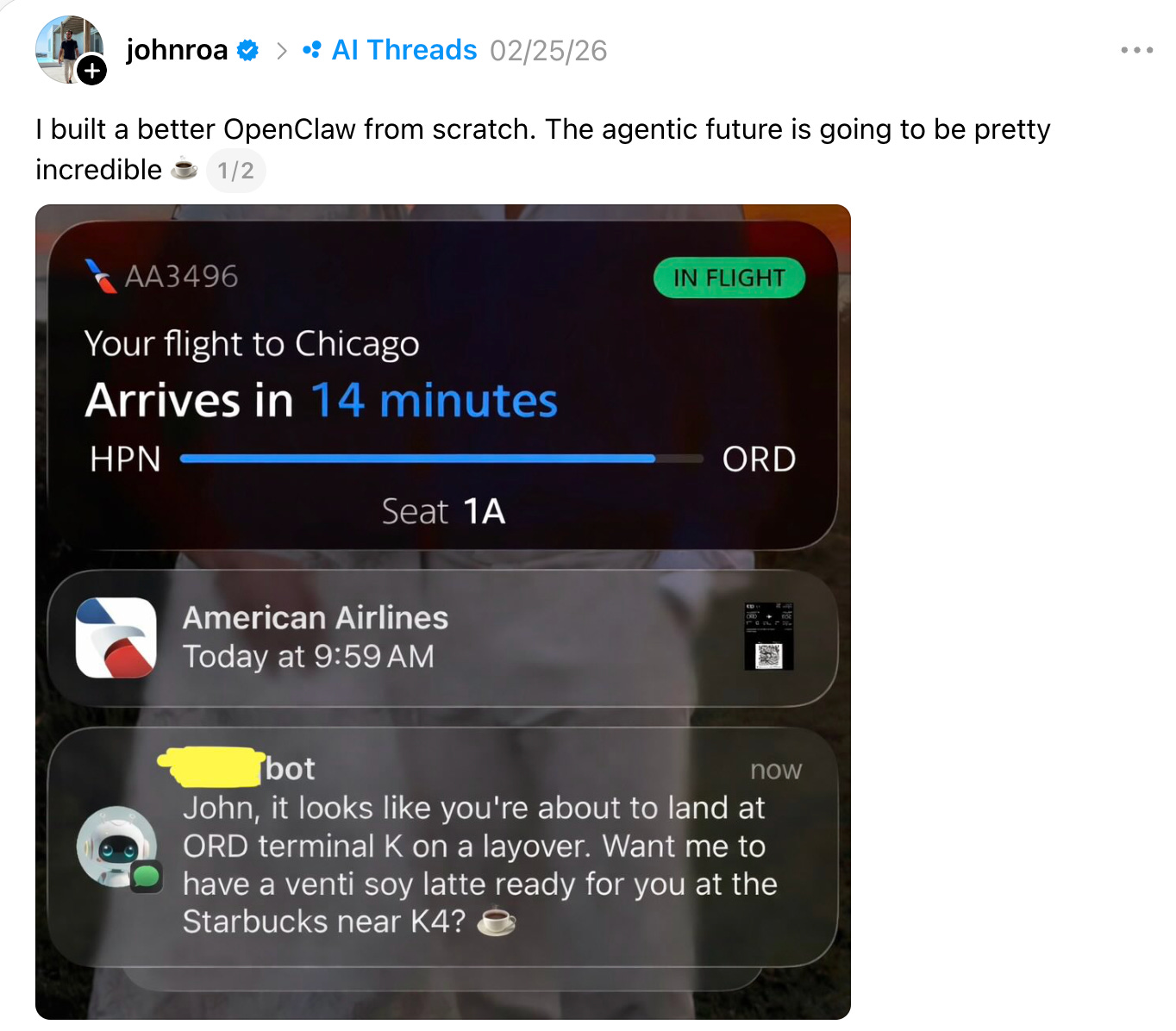

Social Signals readers know that I’m certainly not anti-AI. Anthropic itself says AI can and should support national security, and we have real examples where automated agents are useful, like travel booking, research, and doing my expenses at work.

The issue isn’t use. The issue is where the human boundary sits, who gets to define it, and how easily “lawful” becomes a loophole. Especially when our elected leaders view laws as open to interpretation.

The real question this week isn’t whether AI can do more and help humans. It clearly can. It’s going to help us automate very useful things!! Like this:

But… the question is where we still insist on keeping a human in the loop so it doesn’t harm humans.

Because once that loop is gone, getting our humanity back is a lot harder than automating it away. -Greg

🎙️Greg Swan Explains the Internet on AI for Life + War

For my regular segment on DriveTime with DeRusha, Jason DeRusha and I talked about all of the above!! PS: Be sure to check out The DeRusha Download, the official newsletter of Jason DeRusha.

🎧 Greg on Vibes

Last week I was interviewed on D S Simon Media’s podcast, PR’s Top Pros Talk, about my new keynote and POV on How to message and market through the vibe-ified chaos of 2026: The signals shaping how people share, shop, and scroll. You can watch it above and read about it on O’Dwyer’s here: How Vibes Shape Consumer Behavior. Big thanks to Doug Simon and Lynsey Stanicki for having me.

⚡️ Social Signals

The US Supreme Court declined to take up a case that could have expanded copyright protection to fully AI-generated artworks, leaving in place rulings that say copyright requires “human authorship.” The decision reinforces the Copyright Office’s position that images created solely by AI from text prompts aren’t eligible for copyright, even when a person built or directed the system.

I’ve got three smart glasses signals in a row here. First off, Casey Newton made an ad for Meta Ray-Bans that you actually want to watch.

Second, the platform that owns your social graph is now trying to own your shopping cart. Meta is quietly testing an AI shopping assistant inside the Meta AI web interface, and it’s the signal I’ve been watching build for a while. Meta arrives as the third major AI chatbot platform to integrate shopping research, after ChatGPT (November 2025) and Google (January 2026). But Meta brings something neither rival can match natively: behavioral data from billions of users across Facebook, Instagram, and WhatsApp.

Third, the smart glasses pushback cycle keeps accelerating. A new Android app called Nearby Glasses constantly scans for Bluetooth signals from wearable devices made by Meta and Snap, alerting you if someone nearby might be recording you without your knowledge. The developer built it after reading reporting on how Ray-Bans were being weaponized to film people without consent. The fact that this app exists — and that people want it — reminds me of the Glasshole pushback of Google Glass in 2014. It’s a signal I expected to see.

The 184-year-old Cleveland Plain Dealer has started publishing AI-drafted stories, pairing reporter bylines with the label “Advance Local Express Desk” to indicate that the piece was written by generative AI and reviewed by staff.

Angela Benton wrote one of the most resonant pieces I’ve read in a while on why personal websites are replacing social profiles as the meaningful owned space online. The essay traces the 30-year pattern of the internet and argues that Web 1.0 is quietly coming back (Substack).

Oops! A software engineer trying to steer his robot vacuum with a video game controller accidentally gained access to the live camera feeds, microphone audio, and floor plans of nearly 7,000 other DJI vacuums across 24 countries.

Threads added a spot to link your podcast directly in your bio. See it here. I added mine here!

Tweet of the Week:

Reel of the Week: Let’s give more compliments.

Insta of the Week: Skyscraper Guy. Exactly what it sounds like, and I cannot stop watching.

Crane Reel of the Week: This account. (h/t Jake Bullington)

Finsta of the Week: Instagram now has its own finsta. Meta continues to be the most interesting competitor to itself.

Theory of the Week: The GPS theory. A sharp framework on dependency and what happens when we outsource navigation — literal and metaphorical — to technology.

Study of the Week: AI does not reduce work; it intensifies it. An 8-month study of a U.S. tech company with about 200 employees, published in Harvard Business Review, found that AI use did not shrink work but intensified it and made employees busier.

Work of the Week: McD’s Ramadan campaign is genius.

App of the Week: The creators of Dark Sky launched a new weather app. It’s called Acme Weather, and it will send you a push notification if there’s a rainbow outside or the aurora borealis is visible! Worth $25/year? For me it is.

AI Tool of the Week: Pomelli, via Google Labs, added an AI photoshoot feature: you start from a single image of your product, and it creates a whole series of customized product shots from it.

TikTok of the Week: Freebird on ukulele

Thread of the Week: The TMNT personality test. I’m Donatello, btw.

See you on the internet! Keep going! ✨

Greg